Admins

Section dedicated to material for admins.

Backup

Before running the following script, you need to set up a chore with a TI process that executes this line in its Prolog:

SaveDataAll;

/!\ Avoid using the SaveTime setting in tm1s.cfg as it could conflict with other chores/processes trying to run at the same time

Here is the DOS backup script that you can schedule to back up your TM1 server:

netsvc /stop \\TM1server "TM1 service"

sleep 300

rmdir "\\computer2\path\to\backup" /S /Q

mkdir "\\computer2\path\to\backup"

xcopy "\\TM1server\path\to\server" "\\computer2\path\to\backup" /Y /R /S

xcopy "\\TM1server\path\to\server\}*" "\\computer2\path\to\backup" /Y /R /S

netsvc /start \\TM1server "TM1 service"

Beware of Performance Monitor defaults spamming transaction log

The Performance Monitor in TM1 is a great tool to gather helpful statistics about your system and pinpoint all sorts of bottlenecks.

You can start the Performance Monitor in 2 ways:

- In Server Explorer -> Server -> Start Performance Monitor

- Add PerformanceMonitorOn=T in tm1s.cfg; this is a dynamic parameter, so it takes effect within 60 seconds

Once performance monitor is turned on, it starts populating the }Stats control cubes and it updates them every minute.

Well, so far so good...

BUT by default in TM1, ALL cubes in }CubeProperties are set to log ALL transactions. That seems like a sane default: nobody wants to lose track of who changed a cell in a cube, when, and by how much.

So by default, the Performance Monitor will spam the transaction log every minute for the entire uptime of your server. The problem is most acute with }StatsByCube: it logs one line for every cube, and for every }ElementAttributes_dimension cube.

There is a simple remedy: turn off logging in }CubeProperties for all }Stats cubes (IBM documentation is incomplete)

A quick Turbo Integrator process can do the job too:

CellPutS('NO', '}CubeProperties', '}StatsByClient', 'LOGGING');

CellPutS('NO', '}CubeProperties', '}StatsByCube', 'LOGGING');

CellPutS('NO', '}CubeProperties', '}StatsByCubeByClient', 'LOGGING');

CellPutS('NO', '}CubeProperties', '}StatsByRule', 'LOGGING');

CellPutS('NO', '}CubeProperties', '}StatsByProcess', 'LOGGING');

CellPutS('NO', '}CubeProperties', '}StatsByChore', 'LOGGING');

CellPutS('NO', '}CubeProperties', '}StatsForServer', 'LOGGING');

The size of the spamming may only be a few megabytes per day, although it will have an impact on how long your transaction searches take. Also, the transaction log doesn't rotate for a good reason: you don't want to lose valuable transactions. So it will happily fill up the local drive and after a few months or years the server will finally crash.

If you haven't noticed the issue until now, all is not lost. You don't have to keep all this spam thanks to this bash one-liner that will clean up your 50 gigabyte logs:

for f in tm1s20*log; do awk -F, '$8 != "\"}StatsByCube\""' "$f" > "$f".tmp && mv "$f".tmp "$f"; done # one line to rule them all

You will notice it is removing only "}StatsByCube". I could have added the other }StatsBy cubes, but these are literally 2 orders of magnitude less spammy than }StatsByCube on its own.

Challenge for you: try to achieve the same as the bash one-liner above, in any language, in fewer than the 79 characters used above. I made it easy: I left in a lot of superfluous characters. Constructive comments and answers are welcome.
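For readers without awk at hand, the same filter can be sketched in Python. This is a readable equivalent rather than an entry for the challenge. Like the awk version, it assumes the cube name is the 8th comma-separated field and that the value still carries its double quotes; the function name drop_cube_lines and the sample rows are made up for illustration.

```python
def drop_cube_lines(lines, cube="}StatsByCube", field=8):
    """Keep every line whose field-th comma-separated value is not the quoted cube name."""
    wanted = '"' + cube + '"'
    kept = []
    for line in lines:
        parts = line.rstrip("\r\n").split(",")
        # Lines with fewer fields are kept, as awk treats a missing $8 as empty.
        value = parts[field - 1] if len(parts) >= field else ""
        if value != wanted:
            kept.append(line)
    return kept


if __name__ == "__main__":
    # Made-up rows, only shaped like transaction log lines.
    sample = [
        '1,2,3,4,5,6,7,"}StatsByCube",9\n',
        '1,2,3,4,5,6,7,"Sales",9\n',
        "short,line\n",
    ]
    for line in drop_cube_lines(sample):
        print(line, end="")
```

As with the one-liner, write the result to a temporary file first and only then replace the original log.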

Documenting TM1

Section dedicated to documenting TM1 with different techniques and tools.

A closer look at chores

If you ever loaded a .cho file in an editor, this is what you would expect:

534,8

530,yyyymmddhhmmss ------ date/time of the first run

531,dddhhmmss ------ frequency

532,p ------ number p of processes to run

13,16

6,"process name"

560,0

13,16

533,x ------ x=1 active/ x=0 inactive

In the 9.1 series it is possible to see from the Server Explorer which chores are active from the chores menu.

However, this is not the case in the 9.0 series. It is also not possible to see when and how often the chores are running unless you deactivate them first and edit them. Not quite convenient, to say the least.

From the specs above, it is easy to set rules for a parser and deliver all that information in a simple report.

So the perl script attached below is doing just that: listing all chores on your server, their date/time of execution, frequency and activity status.

Procedure to follow:

- Install perl

- Save chores.pl in a folder

- Double-click on chores.pl

- A window opens, enter the path to your TM1 server data folder there

- Open resulting file chores.txt created in the same folder as chores.pl

Result:

ACT / date-time / frequency / chore name

X 2005/08/15 04:55:00 007d 00h 00m 00s currentweek

X 2007/04/28 05:00:00 001d 00h 00m 00s DailyS

X 2007/05/30 05:50:00 001d 00h 00m 00s DAILY_UPDATE

X 2007/05/30 05:40:00 001d 00h 00m 00s DAILY_S_UPDATE

X 2005/08/13 20:00:05 007d 00h 00m 00s eweek

X 2006/04/06 07:30:00 001d 00h 00m 00s a_Daily

X 2007/05/30 06:05:00 001d 00h 00m 00s SaveDataAll

X 2007/05/28 05:20:00 007d 00h 00m 00s WEEKLY BUILD

X 2005/05/15 21:00:00 007d 00h 00m 00s weeklystock

2007/05/28 05:30:00 007d 00h 00m 00s WEEKLY_LOAD
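To make the parsing rules concrete, here is a minimal Python sketch that reads the fields listed above from the text of one .cho file. It is not the chores.pl script. It assumes that only the codes 530, 531, 6 and 533 matter, and that every line starting with 6, is a process name. The names parse_chore and sample are invented for this example.

```python
from datetime import datetime


def parse_chore(text):
    """Parse the text of a .cho file into a dict (530 start, 531 frequency, 6 process, 533 active)."""
    chore = {"start": None, "frequency": None, "processes": [], "active": None}
    for raw in text.splitlines():
        code, sep, value = raw.strip().partition(",")
        if not sep:
            continue  # not a "code,value" line
        if code == "530":
            chore["start"] = datetime.strptime(value, "%Y%m%d%H%M%S")
        elif code == "531":
            # dddhhmmss, padded on the left in case leading zeros were dropped
            v = value.zfill(9)
            chore["frequency"] = "%sd %sh %sm %ss" % (v[0:3], v[3:5], v[5:7], v[7:9])
        elif code == "6":
            chore["processes"].append(value.strip('"'))
        elif code == "533":
            chore["active"] = value == "1"
    return chore


if __name__ == "__main__":
    # Hand-written sample following the layout described above.
    sample = '534,8\n530,20070530060500\n531,001000000\n532,1\n13,16\n6,"SaveDataAll"\n560,0\n13,16\n533,1\n'
    chore = parse_chore(sample)
    flag = "X" if chore["active"] else " "
    print(flag, chore["start"].strftime("%Y/%m/%d %H:%M:%S"), chore["frequency"], chore["processes"])
```

Looping this over every .cho file in the data folder would give a report similar to the one above, with the chore name taken from the file name.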

A closer look at subsets

If you ever loaded a .sub file (subset) in an editor, this is the format you would expect:

283,2 start

11,yyyymmddhhmmss creation date

274,"string" name of the alias to display

18,0 ?

275,d d = number of characters of the MDX expression stored on the next line

278,0 ?

281,b b = 0 or 1 "expand above" trigger

270,d d = number of elements in the subset followed by the list of these elements, this also represents the set of elements of {TM1SubsetBasis()} if you have an MDX expression attached

These .sub files are stored in cube}subs folders for public subsets or user/cube}subs for private subsets.

Often a source of discrepancy in views and reports is the use of static subsets. For example a view was created a while ago, displaying a bunch of customers, but since then new customers got added in the system and they will not appear in that view unless they are manually added to the static subset.

Based on the details above, one could search for all non-MDX/static subsets (wingrep regexp search 275,$ in all .sub files) and identify which might actually need to be made dynamic in order to keep up with slowly changing dimensions.
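If wingrep is not available, the same search can be sketched in Python. The sketch treats a subset as static when its file contains a line that is exactly 275, with no MDX length after it, which is what the regexp above looks for. The function names are invented, and the latin-1 encoding is only an assumption chosen because it never fails to decode.

```python
import os


def is_static_subset(text):
    """True when the 275 line carries no MDX length, i.e. the line is just '275,'."""
    return any(line.strip() == "275," for line in text.splitlines())


def find_static_subsets(data_folder):
    """Yield the path of every .sub file under data_folder that looks static."""
    for root, _dirs, files in os.walk(data_folder):
        for name in files:
            if name.lower().endswith(".sub"):
                path = os.path.join(root, name)
                # latin-1 never fails to decode; the real encoding is not verified here
                with open(path, encoding="latin-1") as handle:
                    if is_static_subset(handle.read()):
                        yield path


if __name__ == "__main__":
    import sys

    # Usage: python static_subsets.py \\servername\datafolder
    for found in find_static_subsets(sys.argv[1]):
        print(found)
```

Because os.walk descends into every folder, this covers both the public cube}subs folders and the private user/cube}subs folders.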

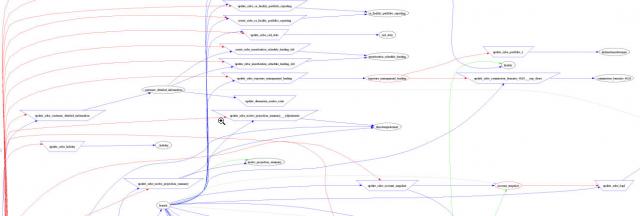

Beam me up Scotty: 3D Animated TM1 Data Flow

Explore the structure of your TM1 system through the Skyrails 3D interface:

If you do not have flash, you can have a look at some screenshots

/!\ WARNING: your eyeballs may pop out!

This is basically the same as the previous work with graphviz, except this time it is pushed to 3D, animated and interactive.

The visualisation engine, Skyrails, is developed by Ph.D. student Yose Widjaja.

I only wrote the TM1 parser and associated Skyrails script to port a high level view of the TM1 Data flow into the Skyrails realm.

How to proceed:

- Download and unzip skyrails beta 2nd build

- Download and unzip TM1skyrails.zip (attachment below) in the skyraildist2 folder

- In the skyraildist2 folder, doubleclick TM1skyrails.pl (you will need perl installed unless someone wants to provide a compiled .exe of the script with the PAR module)

- Enter the path to (a copy of) your TM1 Data folder

- Skyrails window opens, click on the "folder" icon and click TM1

- If you don't want to install perl, you can still enjoy a preview of the Planning Sample that comes out of the box. Just double-click on raex.exe.

w,s,a,d keys to move the camera

Quick legend:

orange -- cube

blue -- process

light cyan -- file

red -- ODBC source

green sphere -- probably a reference to an object that does not exist (anymore)

green edge: intercube rule flow

red edge: process (CellGet/CellPut) flow

Changelog and Downloads:

1.0

1.1 a few mouse gestures added (right click on a node then follow instructions) to get planar (like graphviz) and spherical representations.

1.2 - edges color coded, see legend above

- animated arrows

- gestures to display different flows (no flow/rules only/processes only/all flow)

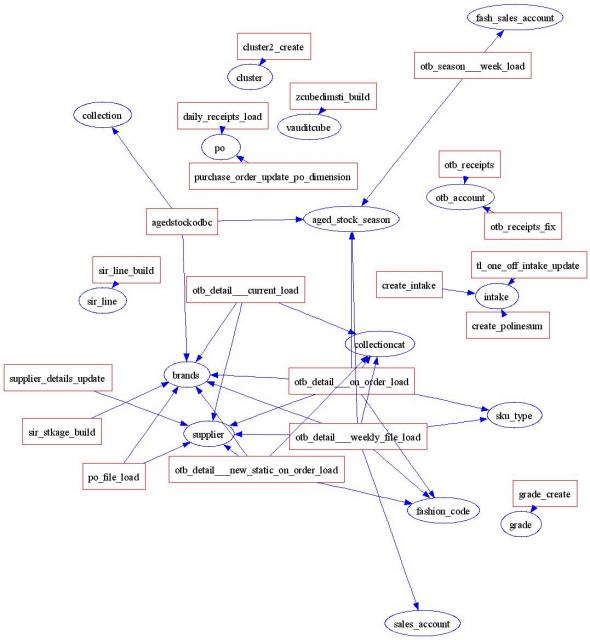

Dimensions updates mapping

When faced with a large "undocumented" TM1 server, it might become hard to see how dimensions are being updated.

The following perl/graphviz script creates a graph to display which processes are updating dimensions.

The script dimflow.pl is looking for functions updating dimensions (DimensionElementInsert, DimensionCreate...) in .pro files in the TM1 datafolder and maps it all together.

Unfortunately it does not take into account manual editing of dimensions.

This is the result:

Legend:

processes = red rectangles

dimensions = blue bubbles

The above screenshot is probably a good example of why such a map can be useful: you can see immediately that several processes are updating the same dimensions.

It might be necessary to have several processes feeding a dimension, though it will be good to review these processes to make sure they are not redundant or conflicting.

Procedure to follow:

- Install perl and graphviz

- Download the script below and rename it to .pl extension

- Double-click on it

- Enter the path to your TM1 Data folder (\\servername\datafolder)

- This will create 2 files "dim.dot" and "dim.gif" in the same folder as the perl script

- Open dim.gif with any browser / picture editor
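The idea behind the mapping can be sketched in a few lines of Python. This is not dimflow.pl, only an illustration under assumptions: the list of TI functions is not exhaustive, only dimension names written as string literals are caught (a variable such as vDim is missed), and a process and a dimension sharing the same name would collapse into one node. The names UPDATERS, dimensions_updated and to_dot are invented for this example.

```python
import re

# TI functions that change a dimension; the list is illustrative, not exhaustive.
UPDATERS = re.compile(
    r"\b(?:DimensionCreate|DimensionDestroy|DimensionDeleteAllElements|"
    r"DimensionElementInsert|DimensionElementDelete|"
    r"DimensionElementComponentAdd|DimensionElementComponentDelete)"
    r"\s*\(\s*'([^']*)'",
    re.IGNORECASE,
)


def dimensions_updated(process_text):
    """Return the sorted dimension names passed as string literals to an updater."""
    return sorted(set(UPDATERS.findall(process_text)))


def to_dot(updates):
    """Build graphviz input from {process name: [dimension names]}."""
    def quote(name):
        return '"' + name.replace('"', '\\"') + '"'

    lines = ["digraph dimflow {"]
    for process, dims in sorted(updates.items()):
        lines.append("  %s [shape=box, color=red];" % quote(process))
        for dim in dims:
            lines.append("  %s [shape=ellipse, color=blue];" % quote(dim))
            lines.append("  %s -> %s;" % (quote(process), quote(dim)))
    lines.append("}")
    return "\n".join(lines)


if __name__ == "__main__":
    # Made-up process body standing in for the content of a .pro file.
    text = "DimensionElementInsert('Customer','','C1','N');\nDimensionCreate('Region');"
    print(to_dot({"load customers": dimensions_updated(text)}))
```

The printed text can be saved as dim.dot and rendered with graphviz, for example with dot -Tgif dim.dot -o dim.gif.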

Duplicate Elements in Rollups

Large nested rollups can lead to elements being counted twice or more within a rollup.

The following process helps you to find, across all dimensions of your system, all rollups that contain elements consolidated more than once under the rollup.

The code isn't the cleanest and there are probably better methods to achieve the same result so don't hesitate to edit the page or comment.

It proceeds like this:

loop through all dimensions

loop through all rollups of a dimension

if(number of distinct elements in rollup <> number of elements in rollup)

then loop through all alphanumerically sorted elements of the rollup

duplicates are found next to each other

The process takes less than 30 seconds to run on a 64-bit 9.1.4 system with 212 dimensions, one of which holds more than 1,000 consolidations and some "decent" nesting (9 levels).

/!\ This process will not work with some TM1 versions because of the MDX function DISTINCT, it has been successfully tested on v. 9.1.4.

DISTINCT does not work properly when applied to expanded rollups on v. 9.0.3

You can just copy/paste the following code in the Prolog window of a process.

Just edit the first line to dump the results where you would like to see them.

/!\ The weight of the duplicated elements is also shown, just in case identical elements cancel each other out with opposite weights.

report = 'D:\duplicate.elements.csv';

#config the line above.

#caveat: empty or consolidations-only dimensions will make this process fail, because empty subsets cannot be created

allrollups = '}All rollups';

subset = '}compare';

maxDim = DimSiz('}Dimensions');

i = 1;

#for each dimension

while (i <= maxDim);

vDim = DIMNM('}Dimensions',i);

#test if there are any rollups

#asciioutput(report, vDim);

SubsetDestroy(vDim, allrollups);

SubsetCreatebyMDX(allrollups,'{TM1FILTERBYLEVEL( {TM1SUBSETALL([' | vDim | '])},0)}');

If(SubsetGetSize(vDim,allrollups) <> DimSiz(vDim));

#get a list of all rollups

SubsetDestroy(vDim, allrollups);

SubsetCreatebyMDX(allrollups,'{EXCEPT(TM1SUBSETALL([' | vDim | ']), TM1FILTERBYLEVEL( {TM1SUBSETALL([' | vDim | '])},0))}');

maxRolls = SubsetGetSize(vDim,allrollups);

rollup = 1;

#for each rollup in that dimension

while (rollup <= maxRolls);

Consolidation = SubsetGetElementName(vDim, allrollups, rollup);

If(SubsetExists(vDim, subset) = 1);

SubsetDestroy(vDim, subset);

Endif;

SubsetCreatebyMDX(subset, '{DISTINCT(TM1DRILLDOWNMEMBER( {[' | vDim | '].[' | Consolidation | ']}, ALL, RECURSIVE))}' );

Distinct = SubsetGetSize(vDim, subset);

SubsetDestroy(vDim, subset);

SubsetCreatebyMDX(subset, '{TM1DRILLDOWNMEMBER( {[' | vDim | '].[' | Consolidation | ']}, ALL, RECURSIVE)}' );

All = SubsetGetSize(vDim, subset);

if(All <> Distinct);

SubsetDestroy(vDim, subset);

#alphasort consolidation elements so duplicates are next to eachother and a 1-pass is enough to find them all

SubsetCreatebyMDX(subset, '{TM1SORT( {TM1DRILLDOWNMEMBER( {[' | vDim | '].[' | Consolidation | ']}, ALL, RECURSIVE)},ASC) }' );

maxElem = SubsetGetSize(vDim, subset);

prevE = '';

nelem = 1;

while(nelem <= maxElem);

element = SubsetGetElementName(vDim, subset, nelem);

#if 2+ identical elements next to each other then these are the duplicates

If(prevE @= element);

AsciiOutput(report,'',vDim,Consolidation,element,NumberToString(ELWEIGHT(vDim,Consolidation,element)));

Endif;

prevE = element;

nelem = nelem + 1;

end;

Endif;

rollup = rollup + 1;

end;

SubsetDestroy(vDim, allrollups);

If(SubsetExists(vDim, subset) = 1);

SubsetDestroy(vDim, subset);

Endif;

Endif;

i = i + 1;

end;
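The detection logic itself is independent of TM1 and can be illustrated with a small Python sketch. It assumes the hierarchy is available as a dict mapping each consolidation to the list of its direct children, which is an invented stand-in for the dimension. It ignores weights and, unlike the TI process, reports each duplicated element only once.

```python
def descendants(children, rollup):
    """List every element found under rollup, once per path that reaches it."""
    found = []
    for child in children.get(rollup, []):
        found.append(child)
        found.extend(descendants(children, child))
    return found


def duplicates(children, rollup):
    """Return the elements that appear more than once under rollup."""
    members = descendants(children, rollup)
    if len(set(members)) == len(members):
        return []  # same shortcut as comparing All with Distinct
    members.sort()
    dupes = []
    previous = None
    for element in members:
        # after sorting, repeated elements sit next to each other
        if element == previous and (not dupes or dupes[-1] != element):
            dupes.append(element)
        previous = element
    return dupes


if __name__ == "__main__":
    # Made-up hierarchy: A and C are consolidated twice under Total.
    hierarchy = {
        "Total": ["North", "South", "Key accounts"],
        "North": ["A", "B"],
        "South": ["C"],
        "Key accounts": ["A", "C"],
    }
    print(duplicates(hierarchy, "Total"))
```

Sorting first is what makes a single pass sufficient: without it, every element would have to be compared with all the others.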

Graphing TM1 Data Flow

This is the new version of genflow.pl, a little parser written in perl that creates an input file for graphviz from your TM1 .pro and .rux files, then generates a graph of the data flow in your TM1 server...

(the image has been cropped and scaled down for display, the original image is actually readable)

Legend

ellipses = cubes, rectangles = processes

red = cellget, blue = cellput, green = inter-cube rule

Procedure to follow:

- Install perl and graphviz

- Put the genflow perl script in any folder, make sure it has the .pl extension (not txt)

- Double-click on it

- Enter the full path to your TM1 Data folder, such as \\servername\datafolder

- Hit return and wait until the window disappears

- This creates 2 files: "flow.dot" and "flow.gif" in the same folder as the perl script

- Open "flow.gif" in any browser or picture editor

Changelog

1.4:

.display import view names along the edges

.display zeroout views

.sources differentiated by shape

1.3:

.CellPut parsing fix

.cubes/processes names displayed 'as is'

This is still quite experimental but this could be useful to view at a glance high-level interactions between your inputs, processes, cubes and rules.

Indexing subsets

Maintaining subsets on your server might be problematic. For example, you wanted to delete an old subset that you found out to be incorrect and your server replied this:

This is not quite helpful, as it does not say which views are affected and need to be corrected.

Worse is that, as Admin, you can delete any public subset as long as it is not being used in a public view. If it is used in a user's private view, it will be deleted anyway and that private view might become invalid or just won't load.

To remedy these issues, I wrote this perl script, indexsubset.pl, that will:

- Index all your subsets, including users' subsets.

- Display all unused subsets (i.e. not attached to any existing views)

From the index, you can find out right away in which views a given subset is used.

I suppose the same could be achieved through the TM1 API, though you would have to log in as every user in turn in order to get a full index of all subsets.

Run from a DOS shell: perl indexsubset.pl \\path\to\TM1\server > mysubsets.txt

Processes running history

On a large, undocumented and mature TM1 server, you might find yourself with a lot of processes, wondering how many of them are still in use or when they were last run.

The loganalysis.pl script answers these questions for you.

One could look at the creation/modification time of the processes in the TM1 Data folder; however, to get the history of a given process you would have to sit through pages of tm1smsg.log, which is what the script below does for you.

Procedure to follow for TM1 9.0 or 8.x

- Install perl

- Save loganalysis.pl in a folder

- Stop your TM1 service (necessary to beat the windows lock on tm1smsg.log)

- Copy the tm1smsg.log into the same folder where loganalysis.pl is

- Start your TM1 service

- Double click loganalysis.pl

Procedure to follow for TM1 9.1 and above

- Install perl

- Save loganalysis.pl in a folder

- Copy the tm1server.log into the same folder where loganalysis.pl is

- Double click loganalysis.pl

That should display the newly created processes.txt in notepad and that should look like the following:

First, all processes sorted by name and the last run time, user and how many times it ran.

processes by name:

2005load run 2006/02/09 15:02:33 user Admin [x2]

ADMIN - Unused Dimensions run 2006/04/26 14:02:58 user Admin [x1]

Branch Rates Update run 2006/10/19 15:23:29 user Admin [x1]

BrandAnalysisUpdate run 2005/04/11 08:09:13 user Admin [x33]

....

Second, all processes sorted by last run time, user and how many times it ran.

processes by last run:

2005/04/11 08:09:13 user Admin ran BrandAnalysisUpdate [x33]

2005/04/11 10:26:29 user Admin ran LoadDelivery [x1]

2005/04/19 08:44:22 user Admin ran UpdateAntStockage [x19]

2005/04/26 14:18:17 user Admin ran weeklyodbc [x1]

2005/05/12 08:34:16 user Admin ran stock [x1]

2005/05/12 08:37:59 user Admin ran receipts [x1]

....

The case against single children

I came across hierarchies holding a single child.

While creating a consolidation over only 1 element might make sense in some hierarchies, some people just use consolidations as an alternative to aliases.

Either they just don't know that aliases exist, or they come from an age when TM1 did not have aliases yet.

The following process will help you identify all the "single child" elements in your system.

This effectively loops through all elements of all dimensions of your system, so this could be reused to carry out other checks.

#where to report the results

Report = '\\tm1server\reports\single_children.csv';

#get number of dimensions on that system

TotalDim = Dimsiz('}Dimensions');

#loop through all dimensions

i = 1;

While (i <= TotalDim);

ThisDim = DIMNM('}Dimensions',i);

#foreach dimension

#loop through all their elements

j = 1;

While (j <= Dimsiz(ThisDim));

Element = DIMNM(ThisDim,j);

#report the parent if it has only 1 child

If( ELCOMPN(ThisDim, Element) = 1 );

AsciiOutput(Report,ThisDim,Element,ELCOMP(ThisDim,Element,1));

Endif;

#report if consolidation has no child!!!

If( ELCOMPN(ThisDim, Element) = 0 & ELLEV(Thisdim, Element) > 0 );

single = single + 1;

AsciiOutput(Report,ThisDim,DIMNM(ThisDim,j),'NO CHILD!!');

Endif;

j = j + 1;

End;

i = i + 1;

End;

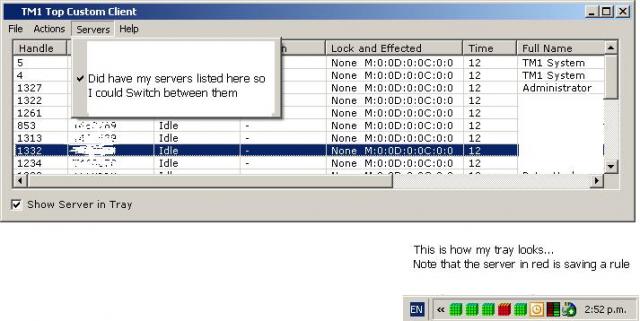

Dynamic tm1p.ini and homepages in Excel

Pointing all your users to a single TM1 Admin host is convenient but not flexible if you manage several TM1 services.

Each TM1 service might need different settings and you do not necessarily want users to be able to see the development or test services for example.

mytm1.xla is an Excel addin that logs the users on a predefined server and settings as shown on that graph:

With such a setup, you can switch your users from one server to the other without having to tinker with the tm1p.ini files on every single desktop.

This solution probably offers the most flexibility and maintainability as you could add conditional statements to point different groups of users to different servers/settings and even manage and retrieve these settings from a cube through the API.

This addin also includes:

- previous code like the "TM1 freeze" button

- it loads automatically an excel spreadsheet named after the user so each user can customise it with their reports/links for a faster access to their data.

The TM1 macro OPTSET, used in the .xla below, can preconfigure the tm1p.ini with a lot more values.

The official TM1 Help does not reference all the available values though.

Here is a more complete list, you can actually change all the parameters displayed in the Server Explorer File->Options with OPTSET:

AdminHost

DataBaseDirectory

IntegratedLogin

ConnectLocalAtStartup

InProcessLocalServer

TM1PostScriptPrinter

HttpProxyServerHost

HttpProxyServerPort

UseHttpProxyServer

HttpConnectorUrl

UseHttpConnector

and more:

AnsiFiles

GenDBRW

NoChangeMessage

DimensionDownloadMaxSize

this also applies to OPTGET

WARNING:

Make sure that all hosts in the AdminHost line are up and working otherwise Architect/Perspectives will hang for a couple of seconds while trying to connect to these hosts.

Locking and updating locked cubes

Locking cubes is a good way to ensure your (meta)data is not tampered with.

Right click on the cube you wish to lock, then select Security->Lock.

This now protects the cube contents from TI process and (un)intentional admins' changes.

However, this makes updating your (meta)data more time consuming, as you need to remove the lock prior to updating the cube.

Fortunately, the function CubeLockOverride allows you to automate that step. The following TI code demonstrates this:

.lock a cube

.copy/paste the code in a TI Prolog tab

.change the parameters to fit your system

.execute:

# uncomment / comment the next line to see the process win / fail

CubeLockOverride(1);

Dim = 'Day';

Element = 'Day 01';

Attribute = 'Dates 2010';

NewValue = 'Saint Glinglin';

if( CellIsUpdateable('}ElementAttributes_' | Dim, Element, Attribute) = 1);

AttrPutS(NewValue, Dim, Element, Attribute);

else;

ItemReject('could not unlock element ' | Element | ' in ' | Dim);

endif;

Note: CubeLockOverride is among the reserved words listed in the TM1 manual, but its function seems to be documented only in the 8.4.5 release notes.

This works from 8.4.5 to the more recent 9.x series.

Managing the licenses limit

One day you might face, or may already have faced, the problem of too many licenses being in use, so that additional users cannot log in.

Also, on a default setup, nothing stops users from opening several tm1web/perspectives sessions and reaching the license limit.

So in order to prevent that:

.open the cube }ClientProperties, change all users' MaximumPorts to 1

.in your tm1s.cfg add that line, it will timeout all idle connections after 1 hour:

IdleConnectionTimeOutSeconds = 3600

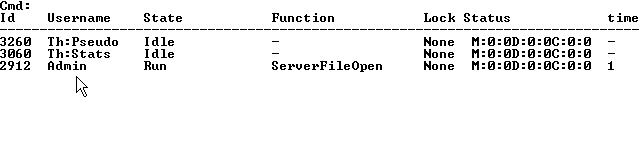

To see who's logged on:

.use tm1top

or

.open the cube }ClientProperties

all logged users have the STATUS measure set to "ACTIVE"

or

.in server manager (rightclick server icon), click "Select clients..." to get the list

To kick some users without taking the server down:

in server explorer right click on your server icon -> Server Manager

select disconnect clients and "Select clients..."

then OK and they are gone.

Unfortunately there is still no workaround for the admin to log in when users take all the slots allowed.

Monitor rules and processes

Changing a rule or process in TM1 does not show up in the logs.

That is fine as long as you are the only Power User able to tinker with these objects.

Unfortunately, it can get out of hand pretty quickly as more power users join the party and make changes that might impact other departments' data.

So here goes a simple way to report changes.

The idea is to compare the current files on the production server with a backup from the previous day.

You will need:

- Access to the live TM1 Data Folder

- Access to the last daily backup

- A VB script to email results you can find one there

- diff, egrep and unix2dos

Save these files in D:\TM1DATA\BIN for example, or some path accessible to the TM1 server.

In the same folder, create a diff.bat file and replace all the TM1DATA paths with those of your configuration:

@echo off

cd D:\TM1DATA\BIN

del %~1

rem windows file compare fc is just crap, must fallback to the mighty GNU binutils

diff -q "\\liveserver\TM1DATA" "\\backupserver\TM1DATA" | egrep "\.(pro|RUX|xdi|xru|cho)" > %~1

rem make it notepad friendly, i.e. add these horrible useless CR chars at EOL, it's 2oo8 but native windows apps are just as deficient as ever

unix2dos %~1

rem if diff is not empty then email results

if %~z1 GTR 1 sendattach.vbs mailserver 25 from.email to.email "[TM1] daily changes log" " " "D:\TM1DATA\BIN\%~1"

Now you can set a TM1 process with the following line to run diff.bat and schedule it from a chore.

ExecuteCommand('cmd /c D:\TM1DATA\BIN\diff.bat diff.txt',0);

Best is to run the process at close of business, just before creating the backup of the day.

And you should start receiving emails like these:

Files \\liveserver\TM1DATA\Check Dimension CollectionCat.pro and \\backupserver\TM1DATA\Check Dimension CollectionCat.pro differ

Files \\liveserver\TM1DATA\Productivity.RUX and \\backupserver\TM1DATA\Productivity.RUX differ

Only in \\liveserver\TM1DATA: Update Cube Branch Rates.pro

In this case we can see that the rules from the Productivity cube have changed today.
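Where diff, egrep and unix2dos are not available, the comparison step alone can be sketched with the Python standard library. This is an illustration, not a replacement for diff.bat: it does not send any email, it matches the extensions case-insensitively at the end of the file name (the egrep pattern above is case-sensitive and not anchored), and the function name changed_objects is invented.

```python
import filecmp
import os

WATCHED = (".pro", ".rux", ".xdi", ".xru", ".cho")


def changed_objects(live, backup):
    """Compare the top level of two data folders, like diff -q piped to egrep."""
    comparison = filecmp.dircmp(live, backup)

    def watched(names):
        return sorted(n for n in names if n.lower().endswith(WATCHED))

    # Compare content byte by byte; a shallow comparison would only look at
    # file type, size and modification time.
    common = watched(comparison.common_files)
    _same, differ, errors = filecmp.cmpfiles(live, backup, common, shallow=False)
    return {
        "differ": sorted(differ + errors),
        "only_live": watched(comparison.left_only),
        "only_backup": watched(comparison.right_only),
    }


if __name__ == "__main__":
    import sys

    # Usage: python changes.py \\liveserver\TM1DATA \\backupserver\TM1DATA
    report = changed_objects(sys.argv[1], sys.argv[2])
    for kind in ("differ", "only_live", "only_backup"):
        for name in report[kind]:
            print(kind, name)
```

Files that could not be read on either side are listed under "differ" so that they are not silently ignored.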

Monitoring chores by email

Using the script in the Send Email Attachments article, it is possible to set it up to automatically email the Admin when a process in a chore fails.

Here is how to proceed:

1. Setup admin email process

First we create a process to add an email field to the ClientProperties cube and add an email to forward to the Admin.

1.1 create a new process

---- Advanced/Parameters Tab, insert this parameter:

AdminEmail / String / / "Admin Email?"

--- Advanced/Prolog tab

if(DIMIX('}ClientProperties','Email') = 0);

DimensionElementInsert('}ClientProperties','','Email','S');

Endif;

--- Advanced/Epilog tab

CellPutS(AdminEmail,'}ClientProperties','Admin','Email');

1.2 Save and Run

2. Create monitor process

---- Advanced/Prolog tab

MailServer = 'smtp.mycompany.com';

LogDir = '\\tm1server\e$\TM1Data\Log';

ScriptDir = 'E:\TM1Data\';

NumericGlobalVariable( 'ProcessReturnCode');

If(ProcessReturnCode <> ProcessExitNormal());

If(ProcessReturnCode = ProcessExitByChoreQuit());

Status = 'Exit by ChoreQuit';

Endif;

If(ProcessReturnCode = ProcessExitMinorError());

Status = 'Exit with Minor Error';

Endif;

If(ProcessReturnCode = ProcessExitByQuit());

Status = 'Exit by Quit';

Endif;

If(ProcessReturnCode = ProcessExitWithMessage());

Status = 'Exit with Message';

Endif;

If(ProcessReturnCode = ProcessExitSeriousError());

Status = 'Exit with Serious Error';

Endif;

If(ProcessReturnCode = ProcessExitOnInit());

Status = 'Exit on Init';

Endif;

If(ProcessReturnCode = ProcessExitByBreak());

Status = 'Exit by Break';

Endif;

vbody= 'Process failed: '|Status| '. Check '|LogDir;

Email = CellGetS('}ClientProperties','Admin','Email');

If(Email @<> '');

S_Run='cmd /c '|ScriptDir|'\SendMail.vbs '| MailServer |' 25 '|Email|' '|Email|' "TM1 chore alert" "'|vBody|'"';

ExecuteCommand(S_Run,0);

Endif;

Endif;

2.1. adjust the LogDir, MailServer and ScriptDir values to your local settings

3. insert this monitor process in chore

This monitor process needs to be placed after every process that you would like to monitor.

How does it work?

Every process, after execution, returns a global variable "ProcessReturnCode", and that variable can be read by a process running right after in a chore.

The above process checks for that return code and pipes it to the mail script if it happens to be different from the normal exit code.

If you have a lot of processes in your chore, you will probably prefer to use the ExecuteProcess command and check the return code in a loop. That method is explained here.

Monitoring chores by email part 2

Following up on monitoring chores by email, we will take a slightly different approach this time.

We use a "metaprocess" to execute all the processes listed in the original chore, check their return status and act on it if needed.

This allows for maximum flexibility as you can get that controlling process to react differently to any exit status of any process.

1. Create process ProcessCheck

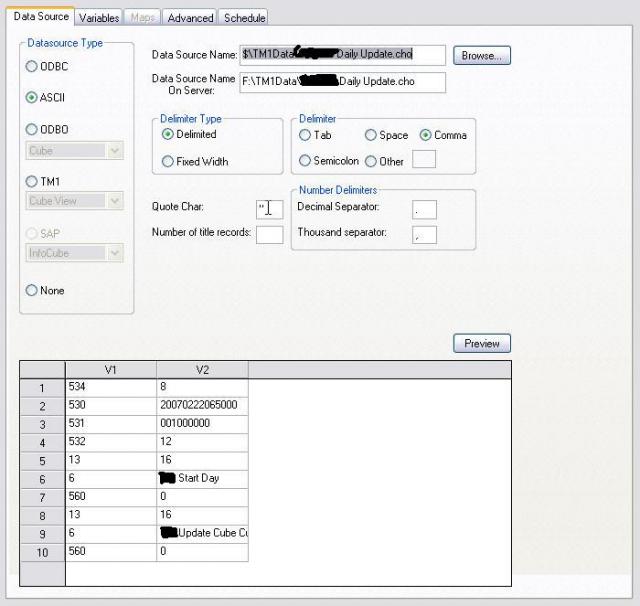

--- Data Source tab

choose ASCII, Data Source Name points to an already existing chore file, for example called Daily Update.cho

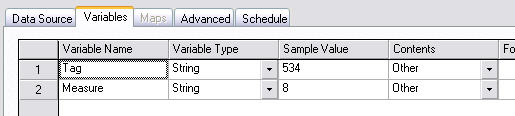

--- Variables tab

Variables tab has to be that way:

--- Advanced/Data tab

#mind that future TM1 versions might use a different format for .cho files and that might break this script

If(Tag @= '6');

MailServer = 'mail.myserver.com';

LogDir = '\\server\f$\TM1Data\myTM1\Log';

#get the process names from the deactivated chore

Process=Measure;

NumericGlobalVariable( 'ProcessReturnCode');

StringGlobalVariable('Status');

ErrorCode = ExecuteProcess(Process);

If(ErrorCode <> ProcessExitNormal());

If(ProcessReturnCode = ProcessExitByChoreQuit());

Status = 'Exit by ChoreQuit';

#Honour the chore flow so stop here and quit too

ChoreQuit;

Endif;

If(ProcessReturnCode = ProcessExitMinorError());

Status = 'Exit with Minor Error';

Endif;

If(ProcessReturnCode = ProcessExitByQuit());

Status = 'Exit by Quit';

Endif;

If(ProcessReturnCode = ProcessExitWithMessage());

Status = 'Exit with Message';

Endif;

If(ProcessReturnCode = ProcessExitSeriousError());

Status = 'Exit with Serious Error';

Endif;

If(ProcessReturnCode = ProcessExitOnInit());

Status = 'Exit on Init';

Endif;

If(ProcessReturnCode = ProcessExitByBreak());

Status = 'Exit by Break';

Endif;

vbody=Process|' failed: '|Status|'. Check details in '|LogDir;

Email = CellGetS('}ClientProperties','Admin','Email');

If(Email @<> '');

S_Run='cmd /c F:\TM1Data\CDOMail.vbs '| MailServer |' 25 '|Email|' '|Email|' "TM1 chore alert" "'|vBody|'"';

ExecuteCommand(S_Run,0);

Endif;

Endif;

Endif;

The code only differs from the first method when the process returns a ChoreQuit exit. Because we will be running the chore Daily Update from another chore, the ChoreQuit will not apply to the latter, so we need to specify it explicitly to respect the flow and stop at the same point.

2. Create chore ProcessCheck

just add the process above and set it to the same frequency and time as the Daily Update chore that you want to monitor

3. Deactivate Daily Update

since the ProcessCheck chore will run the Daily Update chore there is no need to execute Daily Update another time
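The control flow of the metaprocess can be summarised in a short Python sketch, outside of TM1. Everything here is hypothetical scaffolding: execute stands in for ExecuteProcess, notify for the mail script, and the status strings "normal" and "chore quit" for the TI exit codes. The sketch notifies before stopping, which is a choice made for the example rather than a statement about how ChoreQuit behaves.

```python
def process_names(cho_text):
    """Names found on the 6,"..." lines of a .cho file, in file order."""
    names = []
    for line in cho_text.splitlines():
        tag, sep, value = line.strip().partition(",")
        if sep and tag == "6":
            names.append(value.strip('"'))
    return names


def run_chore(cho_text, execute, notify):
    """Run each process in turn, report failures and stop on a chore quit.

    execute(name) must return a status string: "normal" means success and
    "chore quit" means the rest of the chore must be skipped.
    """
    ran = []
    for name in process_names(cho_text):
        status = execute(name)
        ran.append(name)
        if status != "normal":
            notify("%s failed: %s" % (name, status))
        if status == "chore quit":
            break  # honour the chore flow and stop at the same point
    return ran


if __name__ == "__main__":
    # Made-up chore with three processes; the second one asks to quit.
    cho = '532,3\n6,"load"\n560,0\n6,"check"\n560,0\n6,"report"\n560,0\n533,0\n'
    outcome = {"load": "normal", "check": "chore quit", "report": "normal"}
    print(run_chore(cho, lambda name: outcome[name], print))
```

Keeping the list of processes in the deactivated chore file means the monitored chore can still be edited from the Server Explorer without touching the monitoring code.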

Monitoring users logins

A quick way to monitor user logins/logouts on your system is to log the STATUS value (i.e. ACTIVE or blank) from the }ClientProperties cube.

View->Display Control Objects

Cubes -rightclick- Security Assignments

browse down to the }ClientProperties cube and make sure the Logging box is checked

tm1server -rightclick- View Transaction Log

Select Cubes: }ClientProperties

All the transactions are stored in the tm1s.log file, however if you are on a TM1 version prior to version 9.1 and hosted on a Windows server, the file will be locked.

A "Save Data" will close the log file and add a timestamp to its name, so you can start playing with it.

/!\ This trick does not work in TM1 9.1SP3 as it does not update the STATUS value.

Pushing data from an iSeries to TM1

TM1 chore scheduling is frequency based, i.e. it will run and try to pull data after a predefined period of time regardless of the availability of the data at the source. Unfortunately, it can be hit or miss, and it can even become a maintenance issue when Daylight Saving Time comes into play.

Ideally you would need to import or get the data pushed to TM1 as soon as it is available. The following article shows one way of achieving that goal with an iSeries as the source...

Prerequisites on the TM1 server:

- Mike Cowie's TIExecute

- iSeries Client Access components (iSeries Access for Windows Remote Command service)

Procedure to follow

- Drop TM1ChoreExecute, TM1ProcessExecute, associated files and the 32bit TM1 API dlls in a folder on the TM1 server (see readme in the zip for details)

- Start iSeries Access for Windows Remote Command on the TM1 server, set as automatic and select a user that can execute the TM1ChoreExecute

- In client access setup: set remote incoming command "run as system" + "generic security"

- On your iSeries, add the following command after all your queries/extracts:

RUNRMTCMD CMD('start D:\path\to\TM1ChoreExecute AdminServer TM1Server UserID Password ChoreName') RMTLOCNAME('10.xx.x.xx' *IP) WAITTIME(10)

10.xx.x.xx IP of your TM1 server

D:\path\to path where the TM1ChoreExecute is stored

AdminServer name of machine running the Admin Server service on your network.

TM1Server visible name of your TM1 Server (not the machine name of the machine running TM1).

UserID TM1 user ID with credentials to execute the chore.

Password TM1 user ID's password to the TM1 Server.

ChoreName name of requested chore to be run to load data from the iSeries.

You should consider setting a user/pass to restrict access to the iSeries remote service and avoid abuse.

But ideally an equivalent of TM1ChoreExecute should be compiled and executed directly from the iSeries.

Quick recovery from data loss

A luser just ran that hazardous process or spreading on the production server and as a result trashed loads of data on your beloved server.

You cannot afford to take the server down to get yesterday's backup and they need the data now...

Fear not, the transaction log is here to save the day.

- In server explorer, right click on server->View Transaction Log

- Narrow the query as much as you can to the time/client/cube/measures that you are after

- /!\ Mind the date is in north-american format mm/dd/yyyy

- Edit->Select All

- Edit->Back Out will rollback the selected entries

Alternatively, you could get the most recent backup of the corresponding .cub of the "damaged" cube:

- In server explorer: right-click->unload cube

- Overwrite the .cub with the backed up .cub

- Reload the cube from server explorer by opening any view from it

Store any type of files in the Applications folder

The Applications folder is great but limited to views and xls files. Well, not anymore.

The following explains how to make available just any file in your Applications folders.

1. create a file called myfile.blob in }Applications\ on your TM1 server

it should contain the following 3 lines:

ENTRYNAME=tutorial.pdf

ENTRYTYPE=blob

ENTRYREFERENCE=TM1:///blob/public/.\}Externals\tutorial.pdf

2. place your file, tutorial.pdf in this case, in }Externals or whatever path you defined in ENTRYREFERENCE

3. restart your TM1 service

ENTRYNAME is the name that will be displayed in Server Explorer.

ENTRYREFERENCE is the path to your actual file. The file does not need to be in the folder }Externals but the server must be able to access it

/!\ avoid large files: there is no sign telling you to wait while the file loads, so impatient users might click several times on the file and involuntarily flood the server or themselves.

/!\ add the extension in ENTRYNAME to avoid confusion, although it is not a .xls file, it will be displayed with an XLS icon.
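The three-line descriptor above is easy to generate when you have many files to publish. What follows is only a minimal sketch: the helper name `write_blob_entry` is hypothetical, and the `TM1:///blob/public/` prefix is an assumption about the reference scheme, so adjust it to whatever works on your server.

```python
from pathlib import Path

# Assumed reference prefix; change it if your server expects something else.
BLOB_PREFIX = "TM1:///blob/public/"


def write_blob_entry(applications_dir, blob_name, display_name, target_path):
    """Write a .blob descriptor holding the three lines described above.

    applications_dir: path to the }Applications folder (or a subfolder)
    blob_name:        file name of the descriptor, e.g. "myfile.blob"
    display_name:     name shown in Server Explorer, extension included
    target_path:      location of the real file as seen by the server
    """
    lines = [
        f"ENTRYNAME={display_name}",
        "ENTRYTYPE=blob",
        f"ENTRYREFERENCE={BLOB_PREFIX}{target_path}",
    ]
    blob_path = Path(applications_dir) / blob_name
    blob_path.write_text("\n".join(lines) + "\n", encoding="utf-8")
    return blob_path
```

Calling `write_blob_entry(apps_dir, "myfile.blob", "tutorial.pdf", r".\}Externals\tutorial.pdf")` would reproduce the example above. The service restart in step 3 is still needed.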

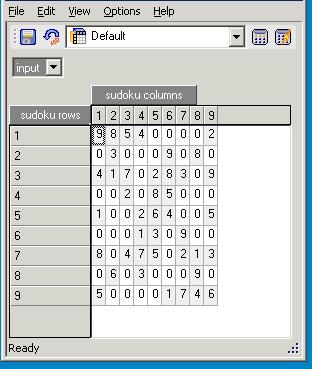

TM1 Sudoku

Beyond the purely ludic and mathematical aspects of sudoku, this code demonstrates how to set up dimensions, cubes, views, cell formatting, and security at element and cell levels, all through Turbo Integrator in just one process.

Thanks to this application, you can demonstrate your TM1 ROI: none of your company employees will need to shell out £1 ever again for their daily sudoku from the Times.

Alternatively, you could move your users to a "probation" group before they start their shift. It is only by completing successfully the sudoku that the users will be moved back to their original group.

This way you can ensure your company employees are mentally fit to carry out changes to the budget, especially after last evening's ethylic abuses down the pub.

Of course there are many sudokus available for Excel. This one is meant to be played primarily from the cube viewer, but you could also slice the view and play it from Excel.

How to install:

.Save the processes Create Sudoku.pro and Check Sudoku.pro in your TM1 folder and reload your server or copy the code directly to new turbo integrator processes.

.Execute "Create Sudoku". That creates the cube, default view and new puzzle in less than a second.

The user can input numbers in the "input" grid only where there are zeroes. The "solution" grid cannot be read by default.

.Execute "Check Sudoku" to verify your input grid matches the solution.

If you are logged under an admin account, you will not see any cells locked, you need to be under the group defined in the process to see the cells properly locked.

You might want to change the default group allowed to play and the number of initial pairs that are blanked in order to increase difficulty.

The algorithm provided to generate the sudoku could be quickly modified to solve by brute force any sudoku. Provided the sudoku grid is valid, it will find the solution, however some sudokus with too many empty cells will have more than one solution.
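To illustrate the brute-force idea and the remark about multiple solutions, here is a minimal backtracking sketch in Python. It is not the Turbo Integrator code from the processes above; the function names and the 9x9 list-of-lists grid (0 meaning an empty cell) are assumptions made for this example.

```python
def candidates(grid, r, c):
    """Values 1..9 not yet used in the row, column or 3x3 box of cell (r, c)."""
    used = set(grid[r]) | {grid[i][c] for i in range(9)}
    br, bc = 3 * (r // 3), 3 * (c // 3)
    used |= {grid[i][j] for i in range(br, br + 3) for j in range(bc, bc + 3)}
    return [v for v in range(1, 10) if v not in used]


def solve(grid):
    """Fill the grid in place by brute force; return True if a solution exists."""
    for r in range(9):
        for c in range(9):
            if grid[r][c] == 0:
                for v in candidates(grid, r, c):
                    grid[r][c] = v
                    if solve(grid):
                        return True
                    grid[r][c] = 0  # undo and try the next value
                return False
    return True  # no empty cell left


def count_solutions(grid, limit=2):
    """Count solutions, stopping as soon as `limit` of them have been found."""
    for r in range(9):
        for c in range(9):
            if grid[r][c] == 0:
                total = 0
                for v in candidates(grid, r, c):
                    grid[r][c] = v
                    total += count_solutions(grid, limit - total)
                    grid[r][c] = 0
                    if total >= limit:
                        break
                return total
    return 1  # a completely filled grid counts as one solution
```

`count_solutions(grid) == 1` is a quick way to check that a puzzle with many blanked pairs still has a unique solution before handing it to players.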

TM1 services on the command line

removing a TM1 service

in a DOS shell:

go to the \bin folder where TM1 is installed then:

tm1sd -remove -n "TM1 Service"

where "TM1 Service" is the name of an existing TM1 service

or: sc delete "TM1 Service"

removing the TM1 Admin services

sc delete tm1admsdx64

sc delete TM1ExcelService

installing a TM1 service

in a DOS shell:

go to the \bin folder where TM1 is installed then:

tm1sd -install -n "TM1 Service" -z DIRCONFIG

where DIRCONFIG is the absolute path where the tm1s.cfg of your TM1 Service is stored

manually starting a TM1 service

from a DOS shell in the \bin folder of the TM1 installation:

tm1s -z DIRCONFIG

remotely start a TM1 service

sc \\TM1server start "TM1 service"

remotely stop a TM1 service

sc \\TM1server stop "TM1 service"
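If you drive these commands from a script, a thin wrapper keeps the quoting of service names with spaces out of your way. This is only a sketch: `sc_command` and `run_sc` are hypothetical helper names, and the wrapper does nothing more than call the same `sc` commands shown above.

```python
import subprocess

ALLOWED_ACTIONS = ("start", "stop", "query")


def sc_command(action, service, host=None):
    """Build the argument list for sc, optionally targeting a remote host."""
    if action not in ALLOWED_ACTIONS:
        raise ValueError(f"unsupported action: {action}")
    cmd = ["sc"]
    if host:
        # sc expects the remote machine as \\hostname
        cmd.append("\\\\" + host.lstrip("\\"))
    cmd += [action, service]
    return cmd


def run_sc(action, service, host=None):
    """Run sc on Windows and return the completed process (output captured)."""
    return subprocess.run(
        sc_command(action, service, host),
        capture_output=True,
        text=True,
        check=False,
    )
```

For example, `run_sc("stop", "TM1 service", host="TM1server")` runs the remote stop command above, and the returned object's `returncode` and `stdout` tell you how it went.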

TM1Top

TM1Top provides realtime monitoring of your TM1 server, pretty much like the GNU top command.

It is bundled with TM1 only from version 9.1. You might have to ask your support contact to get it or get Ben Hill's TM1Top below.

. Dump the files in a folder

. Edit tm1top.ini, replace myserver and myadminhost with your setup

servername=myserver

adminhost=myadminhost

refresh=5

logfile=C:\tm1top.log

logperiod=0

logappend=T

. Run the tm1top.exe

Commands:

X exit

W write display to a file

H help

V verify/login to allow cancelling jobs

C cancel threads, you must first login to use that command

Keep in mind all it does is to insert a "ProcessQuit" command in the chosen thread.

Hence it will not work if the user is calculating a large view or a TI is stuck in a loop where it never reads the next data record, as the quit command is entered for the next data line rather than the next line of code. Then your only option becomes to terminate the user's connection with the server manager or API. (thanks Steve Vincent).

Ben "Kyro" Hill did a great job developing a very convenient GUI TM1Top.

WebSphere Liberty Profile SSL configuration for TM1Web

TL;DR Skip directly to the bottom of the article to find out the fastest and most secure SSL configuration for WLP/TM1Web. Otherwise, read on to understand the how and the why.

TM1Web, also known as "Planning Analytics Spreadsheet Services", is a web app serving websheets through the IBM WebSphere Liberty Profile webserver, currently operating under Java Runtime Environment 1.8.0.

The encryption to communicate with that WLP server is handled by a choice of ciphersuites to be configured in <TM1Web_install>\wlp\usr\webserver\server.xml

One line of particular interest regarding security and compliance is the following setting the enabled ciphers:

<ssl id="CAM" keyStoreRef="CAMEncKeyStore" serverkeyAlias="encryption" enabledciphers="SSL_ECDHE_ECDSA_WITH_AES_128_GCM_SHA256 SSL_ECDHE_ECDSA_WITH_AES_256_GCM_SHA384" sslProtocol="TLSv1.2"/>

However, the IBM documentation fails to provide the list of available ciphersuites. If you are familiar with ciphersuites, it should be an easy guess after a few online searches. But you can also unveil the exact list of cipher suites by compiling and running the linked Ciphers.java code.

javac Ciphers.java

java Ciphers

I will spare you all the work of finding, downloading and installing the correct Java compiler, compiling the code and finally executing it. So, here is the list of all the ciphersuites available for TM1Web. The recommended ciphersuites are set in bold.

TLS v1.2

- SSL_DHE_DSS_WITH_AES_128_CBC_SHA

- SSL_DHE_DSS_WITH_AES_128_CBC_SHA256

- SSL_DHE_DSS_WITH_AES_128_GCM_SHA256

- SSL_DHE_DSS_WITH_AES_256_CBC_SHA

- SSL_DHE_DSS_WITH_AES_256_CBC_SHA256

- SSL_DHE_DSS_WITH_AES_256_GCM_SHA384

- SSL_DHE_RSA_WITH_AES_128_CBC_SHA

- SSL_DHE_RSA_WITH_AES_128_CBC_SHA256

- SSL_DHE_RSA_WITH_AES_128_GCM_SHA256

- SSL_DHE_RSA_WITH_AES_256_CBC_SHA

- SSL_DHE_RSA_WITH_AES_256_CBC_SHA256

- SSL_DHE_RSA_WITH_AES_256_GCM_SHA384

- SSL_ECDHE_ECDSA_WITH_AES_128_CBC_SHA

- SSL_ECDHE_ECDSA_WITH_AES_128_CBC_SHA256

- SSL_ECDHE_ECDSA_WITH_AES_128_GCM_SHA256

- SSL_ECDHE_ECDSA_WITH_AES_256_CBC_SHA

- SSL_ECDHE_ECDSA_WITH_AES_256_CBC_SHA384

- SSL_ECDHE_ECDSA_WITH_AES_256_GCM_SHA384

- SSL_ECDHE_RSA_WITH_AES_128_CBC_SHA

- SSL_ECDHE_RSA_WITH_AES_128_CBC_SHA256

- SSL_ECDHE_RSA_WITH_AES_128_GCM_SHA256

- SSL_ECDHE_RSA_WITH_AES_256_CBC_SHA

- SSL_ECDHE_RSA_WITH_AES_256_CBC_SHA384

- SSL_ECDHE_RSA_WITH_AES_256_GCM_SHA384

- SSL_ECDH_ECDSA_WITH_AES_128_CBC_SHA

- SSL_ECDH_ECDSA_WITH_AES_128_CBC_SHA256

- SSL_ECDH_ECDSA_WITH_AES_128_GCM_SHA256

- SSL_ECDH_ECDSA_WITH_AES_256_CBC_SHA

- SSL_ECDH_ECDSA_WITH_AES_256_CBC_SHA384

- SSL_ECDH_ECDSA_WITH_AES_256_GCM_SHA384

- SSL_ECDH_RSA_WITH_AES_128_CBC_SHA

- SSL_ECDH_RSA_WITH_AES_128_CBC_SHA256

- SSL_ECDH_RSA_WITH_AES_128_GCM_SHA256

- SSL_ECDH_RSA_WITH_AES_256_CBC_SHA

- SSL_ECDH_RSA_WITH_AES_256_CBC_SHA384

- SSL_ECDH_RSA_WITH_AES_256_GCM_SHA384

- SSL_RSA_WITH_AES_128_CBC_SHA

- SSL_RSA_WITH_AES_128_CBC_SHA256

- SSL_RSA_WITH_AES_128_GCM_SHA256

- SSL_RSA_WITH_AES_256_CBC_SHA

- SSL_RSA_WITH_AES_256_CBC_SHA256

- SSL_RSA_WITH_AES_256_GCM_SHA384

TLS v1.3

- TLS_AES_128_GCM_SHA256

- TLS_AES_256_GCM_SHA384

- TLS_CHACHA20_POLY1305_SHA256

- TLS_DHE_RSA_WITH_CHACHA20_POLY1305_SHA256

- TLS_ECDHE_ECDSA_WITH_CHACHA20_POLY1305_SHA256

- TLS_ECDHE_RSA_WITH_CHACHA20_POLY1305_SHA256

- TLS_EMPTY_RENEGOTIATION_INFO_SCSV

So what does this all mean? A ciphersuite is a combination of algorithms used in the SSL/TLS protocol for secure communication. Each ciphersuite name is structured to describe the algorithmic contents of it.

For example, the ciphersuite `SSL_ECDHE_ECDSA_WITH_AES_256_GCM_SHA384` is built with the following components:

- SSL: This indicates the protocol version. In this case, it's TLS v1.2 (Transport Layer Security).

- ECDHE: This stands for Elliptic Curve Diffie-Hellman Ephemeral. It's the key exchange method used in this ciphersuite. Diffie-Hellman key exchanges which use ephemeral (generated per session) keys provide forward secrecy, meaning that the session cannot be decrypted after the fact, even if the server's private key is known. Elliptic curve cryptography provides equivalent strength to traditional public-key cryptography while requiring smaller key sizes, which can improve performance.

- ECDSA: This stands for Elliptic Curve Digital Signature Algorithm. It's the algorithm used for server authentication. The server's certificate must contain an ECDSA-capable public key.

- AES_256: This indicates the symmetric encryption cipher used. In this case, it's AES (Advanced Encryption Standard) with 256-bit keys. This is reasonably fast and not broken.

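- GCM: This stands for Galois/Counter Mode. It's a mode of operation for symmetric key cryptographic block ciphers, applied here to AES. GCM is an authenticated (AEAD) mode, so it both encrypts each message and guarantees that it has not been tampered with in transit.

- SHA384: This is the hash function used. In GCM ciphersuites, message integrity already comes from GCM itself, so the hash serves the handshake's pseudorandom function (key derivation and handshake verification) rather than a per-message MAC. In CBC ciphersuites, the hash is what drives the Message Authentication Code (MAC) protecting each message. SHA384 is a great choice.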
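The breakdown above follows directly from the naming convention, so it can be automated for the TLS v1.2 style names in the list. A minimal sketch follows; the function name and the dictionary keys are made up for this example, and it only handles names of the form `<prefix>_<key exchange>[_<authentication>]_WITH_<cipher>_<mode>_<hash>`.

```python
def parse_ciphersuite(name):
    """Split a TLS 1.2 style ciphersuite name into its components."""
    prefix, rest = name.split("_", 1)
    if "_WITH_" not in rest:
        raise ValueError(f"not a <kx>_WITH_<cipher> style name: {name}")
    handshake, bulk = rest.split("_WITH_", 1)
    hs_parts = handshake.split("_")
    bulk_parts = bulk.split("_")
    return {
        "protocol_prefix": prefix,
        "key_exchange": hs_parts[0],
        # Names such as SSL_RSA_WITH_... use RSA for both roles.
        "authentication": hs_parts[1] if len(hs_parts) > 1 else hs_parts[0],
        "cipher": "_".join(bulk_parts[:-2]),
        "mode": bulk_parts[-2],
        "hash": bulk_parts[-1],
    }
```

Applied to `SSL_ECDHE_ECDSA_WITH_AES_256_GCM_SHA384`, it returns ECDHE, ECDSA, AES_256, GCM and SHA384 for the respective components. The short TLS v1.3 names such as `TLS_AES_128_GCM_SHA256` carry no `_WITH_` part and are rejected on purpose.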

Some ciphersuites in the list above are not recommended because of the following obsolete algorithms:

- DHE_DSS: This is an old key exchange algorithm that uses the Diffie-Hellman key exchange and the DSS (Digital Signature Standard) algorithm for digital signatures. It's not recommended for use because DSS is considered less secure than ECDSA (Elliptic Curve Digital Signature Algorithm), and Diffie-Hellman is not as efficient as Elliptic Curve Diffie-Hellman.

- RSA as server authentication: While RSA is still widely used, it's not recommended for use as both the key exchange and authentication algorithm. This is because it does not provide Perfect Forward Secrecy.

- CBC: This is a mode of operation for symmetric key cryptographic block ciphers. It's not recommended for use because it's vulnerable to padding oracle attacks, which allow an attacker to decrypt ciphertext without knowing the encryption key. Instead, it's recommended to use GCM (Galois/Counter Mode) or CCM (Counter with CBC-MAC).

- SHA (also known as SHA1): This is a hash function that is not recommended for use because it's considered to be weak and vulnerable to collision attacks. Instead, it's recommended to use SHA-256 or SHA-384.
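The four criteria above can be turned into a quick filter over the list of ciphersuite names. This is a sketch that matches on name patterns only and covers just the algorithms listed above; the function name and the wording of the labels are made up for the example.

```python
def weaknesses(name):
    """Return the obsolete algorithms (as listed above) found in a suite name."""
    found = []
    if "_DSS_" in name:
        found.append("DSS signatures")
    if name.startswith(("SSL_RSA_WITH_", "TLS_RSA_WITH_")):
        found.append("RSA key exchange (no forward secrecy)")
    if "_CBC_" in name:
        found.append("CBC mode")
    if name.endswith("_SHA"):
        found.append("SHA-1")
    return found


def keep_recommended(names):
    """Keep only the suites for which none of the checks above fires."""
    return [n for n in names if not weaknesses(n)]
```

Running `keep_recommended` over the TLS v1.2 list leaves only the GCM suites with SHA256 or SHA384 that do not use DSS or RSA key exchange. It does not judge anything the list above does not mention.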

faster and more secure TM1Web with TLS v1.3

TLS 1.3 offers several performance improvements over TLS 1.2. One of the key differences is the faster TLS handshake. A full TLS 1.2 handshake needs two round trips before any application data can flow, and most of it, including the server certificate, travels in clear text. TLS 1.3 completes the handshake in a single round trip and encrypts the server certificate by default, which allows for faster, more responsive connections.

Another performance improvement in TLS 1.3 is the Zero Round-Trip Time (0-RTT) feature. This feature eliminates an entire round-trip on the handshake, saving time and improving overall site performance. When accessing a site that has been visited previously, a client can send data on the first message to the server by leveraging pre-shared keys (PSK) from the prior session.

TLS 1.3 offers more robust security features compared to TLS 1.2. It supports cipher suites that do not include key exchange and signature algorithms, which are used in TLS 1.2. TLS 1.2 used ciphers with cryptographic weaknesses that had security vulnerabilities. TLS 1.3 also eliminates the security risk posed by a static key, which can compromise security if accessed illicitly. Furthermore, TLS 1.3 uses the Diffie-Hellman Ephemeral algorithm for key exchange, which generates a unique session key for every new session. This session key is one-time and is discarded at the end of every session, enhancing forward secrecy.

So, an optimal configuration for WLP server.xml will be:

<ssl id="CAM" keyStoreRef="CAMEncKeyStore" serverkeyAlias="encryption" enabledciphers="TLS_AES_128_GCM_SHA256 TLS_AES_256_GCM_SHA384 TLS_CHACHA20_POLY1305_SHA256 TLS_ECDHE_ECDSA_WITH_CHACHA20_POLY1305_SHA256 TLS_ECDHE_RSA_WITH_CHACHA20_POLY1305_SHA256" sslProtocol="TLSv1.3"/>

Unfortunately, I haven't found a way to have both TLSv1.2 and TLSv1.3 protocols available at the same time in that configuration file. If you know how, please leave a comment with the details.

You can easily check the configuration is correct in Chrome, with Developer Tools -> Security -> Security Overview -> Connection: "The connection to this site is encrypted and authenticated using TLS 1.3"
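The same check can be scripted, which is handy on servers without a browser. Below is a minimal sketch using Python's standard `ssl` module (Python 3.7+ built against an OpenSSL with TLS 1.3 support). The function names are made up for the example, and the host and port in the usage comment are placeholders to replace with your own.

```python
import socket
import ssl


def tls13_only_context(verify=True):
    """Client context that refuses anything older than TLS 1.3."""
    ctx = ssl.create_default_context()
    ctx.minimum_version = ssl.TLSVersion.TLSv1_3
    if not verify:
        # Only for self-signed test certificates: disables all verification.
        ctx.check_hostname = False
        ctx.verify_mode = ssl.CERT_NONE
    return ctx


def negotiated_protocol(host, port, verify=True, timeout=5.0):
    """Connect and return (protocol version, cipher name) as negotiated."""
    ctx = tls13_only_context(verify)
    with socket.create_connection((host, port), timeout=timeout) as raw:
        with ctx.wrap_socket(raw, server_hostname=host) as tls:
            return tls.version(), tls.cipher()[0]


# Example (placeholder host and port, adjust to your TM1Web server):
# print(negotiated_protocol("tm1web.example.com", 9510, verify=False))
```

If the server only offers TLS v1.2, the handshake fails with an `ssl.SSLError` instead of returning a version. Note that Python reports the OpenSSL cipher name, which for the TLS v1.2 suites differs from the `SSL_...` names used in server.xml.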

On a side note, the configuration file for TM1Web is located at <TM1Web_install>\webapps\tm1web\WEB-INF\configuration\tm1web_config.xml.

tm1s.cfg parameters cheatsheet

The number of parameters in the tm1s.cfg has been steadily rising as more features have been made available over the years in TM1. So, a cheatsheet summarising all these parameters can help as an overview of the different settings that a TM1 server can be configured with.

This cheatsheet is now available in the following formats:

- csv so you can build your own variations of the cheatsheet

- tm1s.cfg including all parameters, only the required parameters are uncommented, every parameter is set to its known default value (as of 2023-02-14 and according to the IBM online documentation). There should not be a need to explicitly define a parameter when you just want to stick to the default value. However, be cautious that IBM may change default values and that could lead to issues when upgrading versions.

- md markdown to save in your personal knowledge management repository full of markdown files to ripgrep through fzf.vim

- pdf for an allegedly pretty overview (coming soon)

- html below or here on its own that you can "Save as" to use as a local copy.

The cheatsheet is generated with python scripts (coming soon) responsible for the conversion of the csv file to a pandas dataframe and then exported to different formats. The csv file currently holds the following fields:

- parameter: name of the parameter

- optional: indicates if the parameter is either required or optional.

- restart: indicates if the parameter is either dynamic or static 🔄; in the latter case, it requires a service restart when its value has been modified

- url: URL address of the official IBM Planning Analytics documentation for that parameter

- default: default value of that parameter when it is not explicitly declared

- type: type of that parameter (bool, int, float, str, time). This will be useful for scripts checking tm1s.cfg validity

- context: arbitrary categorisation for the context of the parameter, e.g. it can be Network related, Rules related, etc.

- dependency: that may hold the name of another parameter that the current parameter may rely on to operate

- description: short description about what the parameter does

The categorisation is completely arbitrary, most parameters could fit in several categories, so I just picked one that seemed most appropriate. This is a limitation and probably something to look into for the design of future versions of that csv file.
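As an illustration of what the `type` field makes possible, here is a minimal sketch of a tm1s.cfg checker built on the csv. It is not the generation script mentioned above. The sample rows, the values used in the `optional` and `restart` columns, the case-insensitive matching of parameter names and the function names are all assumptions made for this example.

```python
import csv
import io

# Two sample rows using the field names listed above (values are illustrative).
SAMPLE_CSV = """parameter,optional,restart,url,default,type,context,dependency,description
AuditLogOn,optional,dynamic,,F,bool,Logs and Messages,,if true turns audit logging on.
HTTPPortNumber,optional,static,,5001,int,Network,,port number for incoming HTTP(S) requests.
"""


def _is_float(value):
    try:
        float(value)
        return True
    except ValueError:
        return False


CHECKS = {
    "bool": lambda v: v.upper() in ("T", "F"),
    "int": lambda v: v.lstrip("-").isdigit(),
    "float": _is_float,
}


def load_types(csv_text):
    """Map each parameter name (lowercased) to its declared type."""
    reader = csv.DictReader(io.StringIO(csv_text))
    return {row["parameter"].lower(): row["type"] for row in reader}


def check_cfg(cfg_text, types):
    """Return (line number, parameter, problem) for every suspicious line."""
    problems = []
    for lineno, line in enumerate(cfg_text.splitlines(), 1):
        line = line.strip()
        # Skip blanks, comments, the [TM1S] header and lines without '='.
        if not line or line.startswith(("#", "[")) or "=" not in line:
            continue
        name, value = (part.strip() for part in line.split("=", 1))
        kind = types.get(name.lower())
        if kind is None:
            problems.append((lineno, name, "unknown parameter"))
        elif not CHECKS.get(kind, lambda v: True)(value):
            problems.append((lineno, name, f"expected {kind}, got {value!r}"))
    return problems
```

Types without an entry in `CHECKS` (such as str or time) are accepted as they are, so the checker only complains about what it can actually verify.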

And finally, the tm1s.cfg parameters cheatsheet in html.

Note that there is a drop down menu at the top of the Context column. This allows you to show only parameters that relate to a specific aspect of your TM1 server. If you are on mobile, you probably need to rotate your screen for a wide display in order to display all 5 columns.

tm1s.cfg parameters cheatsheet

parameters in bold are required.

🔄 = static parameter, the TM1 service must be restarted for the new value of that parameter to take effect.

| parameter | default | restart | context | description |
|---|---|---|---|---|
| AdminHost | | 🔄 | Network | computer name or IP address of the Admin Host on which an Admin Server is running. |
| AllowReadOnlyChoreReschedule | F | 🔄 | Chores | Provides users with READ access to a chore and the ability to activate deactivate and reschedule chores. |
| AllowSeparateNandCRules | F | 🔄 | Rules | if true then rule expressions for N: and C: levels can be on separate lines using identical AREA definitions. |
| AllRuleCalcStargateOptimization | F | 🔄 | Rules | if true then it can improve performance in calculating views that contain only rule-calculated values. In some unique cases enabling this parameter can result in performance degradation so you should test the effect of the parameter in a development environment before deploying to your production environment. |
| ApplyMaximumViewSizeToEntireTransaction | F | | Views | Applies MaximumViewSize to the entire transaction instead of to individual calculations. |
| AuditLogMaxFileSize | 100 MB | | Logs and Messages | maximum file size that an audit log file can grow to before it is closed and a new file is created. |
| AuditLogMaxQueryMemory | 100 MB | | Logs and Messages | maximum amount of memory that TM1 can use when running an audit log query and retrieving the set of results. |
| AuditLogOn | F | | Logs and Messages | if true turns audit logging on. |
| AuditLogUpdateInterval | 60 | | Logs and Messages | maximum amount of minutes that TM1 waits before moving the events from the temporary audit file into the final audit log. |
| AutomaticallyAddCubeDependencies | T | 🔄 | Rules | Determines if cube dependencies are set automatically or if you must manually identify the cube dependencies for each cube. |
| CacheFriendlyMalloc | F | 🔄 | Cache | Allows for memory alignment that is specific to the IBM Power Platform. |
| CalculationThresholdForStorage | 50 | | Rules | minimum number of rule calculations required for a single cell or Stargate view beyond which TM1 stores the calculations for use during the current server session. |
| CAMPortalVariableFile | | 🔄 | Cognos Analytics | The path to the variables_TM1.xml file in your IBM Cognos installation. |
| CAMUseSSL | F | 🔄 | Cognos Analytics | all communications between TM1 and the Cognos Analytics server must use SSL. |
| CheckCAMClientAlias | T | | Cognos Analytics | When audit logging is enabled with the AuditLogOn parameter the CheckCAMClientAlias parameter determines whether user modifications within Cognos Authentication Manager (CAM) groups are written to the audit log. |
| CheckFeedersMaximumCells | 3000000 | | Rules | Limits the number of cells checked by the Check Feeders option in the Cube Viewer. |
| ClientCAMURI | | | Cognos Analytics | The URI the Cognos Server Connection uses to authenticate TM1 clients. |
| ClientExportSSLSvrCert | F | 🔄 | Authentication and Encryption | TM1 client should retrieve the certificate authority certificate which was originally used to issue TM1 certificate from the MS Windows certificate store. |
| ClientExportSSLSvrKeyID | | 🔄 | Authentication and Encryption | identity key used by a TM1 client to export the certificate authority certificate which was originally used to issue TM1 certificate from the MS Windows certificate store. |
| ClientMessagePortNumber | | 🔄 | Network | secondary port used to accept client messages concerning the progress and ultimate cancellation of a lengthy operation without tying up thread reserves. |
| ClientPingCAMPassport | 900 | | Cognos Analytics | interval in seconds that a client should ping the Cognos Authentication Management server to keep their passport alive. |
| ClientPropertiesSyncInterval | | | Logs and Messages | frequency (in seconds) at which client properties are updated in the }ClientProperties control cube. Set to 1800 seconds to update cube every 30 minutes. |
| ClientVersionMaximum | | | Network | maximum client version that can connect to TM1. |
| ClientVersionMinimum | 8.4.00000 | | Network | minimum client version that can connect to TM1. |
| ClientVersionPrecision | 0 | | Network | precisely identify the minimum and maximum versions of clients that can connect to TM1. |
| CognosMDX.AggregateByAncestorRef | F | 🔄 | Cognos Analytics | When possible replaces aggregation over a member set with a reference to an ancestor if the aggregated member set comprises a complete set of descendants and all members have the weight 1. |
| CognosMDX.CellCacheEnable | T | 🔄 | Cognos Analytics | Allows the IBM Cognos MDX engine to modify TM1 consolidation and calculation cell cache strategies. |
| CognosMDX.PrefilterWithPXJ | F | 🔄 | Cognos Analytics | Expands the data source provider cross join approach to nested filtered sets. |
| CognosMDX.SimpleCellsUseOPTSDK | T | 🔄 | Cognos Analytics | Applies IBM Cognos MDX engine consolidation and calculation cell cache strategies to all cells in query results. |
| CognosMDX.UseProviderCrossJoinThreshold | 0 | 🔄 | Cognos Analytics | Applies the data source provider cross join strategy even if it is not explicitly enabled in Cognos Analytics. |
| CognosTM1InterfacePath | | 🔄 | Cognos Analytics | location of the IBM Cognos Analytics server to use when importing data from a Cognos Package to TM1 configuration using the Package Connector. |
| CreateNewCAMClients | T | | Cognos Analytics | The CreateNewCAMClients server configuration parameter determines how TM1 configuration handles an attempt to log on to the server with CAM credentials in the absence of a corresponding TM1 client. |
| DataBaseDirectory | | 🔄 | Files | data directory from which the database loads cubes dimensions and other objects. |
| DefaultMeasuresDimension | F | 🔄 | Cubes | Identifies if a measures dimension is created. TM1 does not require that a measures dimension be defined for a cube. You can optionally define a measures dimension by modifying the cube properties. |
| DisableMemoryCache | F | 🔄 | Cache | Disables the memory cache used by TM1 memory manager. |
| DisableSandboxing | F | | Sandboxes | if false then users have the ability to use sandboxes across the server. |
| DownTime | | | Chores | time when the database will come down automatically. |
| EnableNewHierarchyCreation | F | 🔄 | Dimensions | multiple hierarchy creation is enabled or disabled. |
| EnableSandboxDimension | F | | Sandboxes | virtual sandbox dimension feature is enabled or disabled. |
| EnableTIDebugging | F | | Turbo Integrator | TurboIntegrator debugging capabilities are enabled or disabled. |
| EventLogging | T | | Logs and Messages | event logger is either turned on or off. |
| EventScanFrequency | 1 | | Logs and Messages | period to check the collection of threads where 1 is the minimum number and 0 disables the scan. |
| EventThreshold.PooledMemoryInMB | 0 | | Logs and Messages | threshold for which a message is printed for the event that the database's pooled memory exceeds a certain value. |
| EventThreshold.ThreadBlockingNumber | 5 | | Logs and Messages | warning is printed when a thread blocks at least the specified number of threads. |
| EventThreshold.ThreadRunningTime | 600 | | Logs and Messages | warning is printed when a thread has been running for the specified length of time. |
| EventThreshold.ThreadWaitingTime | 20 | | Logs and Messages | warning is printed when a thread has been blocked by another thread for the specified length of time. |
| ExcelWebPublishEnabled | F | | TM1Web | Enables the publication of MS Excel files to IBM Cognos TM1 Web as well as the export of MS Excel files from TM1 Web when MS Excel is not installed on the web server. Enable the ExcelWebPublishEnabled parameter when you have TM1 10.1 clients that connect to TM1 10.2.2 servers. |
| FileRetry.Count | 5 | | Logs and Messages | number of retry attempts. |
| FileRetry.Delay | 2000 | | Logs and Messages | time delay between retry attempts. |
| FileRetry.FileSpec | | | Logs and Messages | |
| FIPSOperationMode | 2 | 🔄 | Authentication and Encryption | Controls the level of support for Federal Information Processing Standards (FIPS). |
| ForceReevaluationOfFeedersForFedCellsOnDataChange | F | 🔄 | Rules | When this parameter is set a feeder statement is forced to be re-evaluated when data changes. |
| HTTPOriginAllowList | | | Network | a comma-delimited list of external origins (URLs) that are trusted and can access TM1. |
| HTTPPortNumber | 5001 | 🔄 | Network | port number on which TM1 listens for incoming HTTP(S) requests. |
| HTTPRequestEntityMaxSizeInKB | 32 | | Network | This TM1 configuration parameter sets the maximum size for an HTTP request entity that can be handled by TM1. |
| HTTPSessionTimeoutMinutes | 20 | | Authentication and Encryption | timeout value for authentication sessions for TM1 REST API. |
| IdleConnectionTimeOutSeconds | | | Authentication and Encryption | timeout limit for idle client connections in seconds. |
| IndexStoreDirectory | | 🔄 | Files | Added in v2.0.5 Designates a folder to store index files including bookmark files. |
| IntegratedSecurityMode | | | Authentication and Encryption | the user authentication mode to be used by TM1. |
| IPAddressV4 | | 🔄 | Network | IPv4 address for an individual TM1 service. |
| IPAddressV6 | | 🔄 | Network | IPv6 address for an individual TM1 service. |
| IPVersion | ipv4 | 🔄 | Network | Internet Protocol version used by TM1 to identify IP addresses on the network. |
| JavaClassPath | | 🔄 | Java | parameter to make third-party Java™ libraries available to TM1. |
| JavaJVMArgs | | 🔄 | Java | list of arguments to pass to the Java Virtual Machine (JVM). Arguments are separated by a space and the dash character. For example JavaJVMArgs=-argument1=xxx -argument2=yyy. |
| JavaJVMPath | | 🔄 | Java | path to the Java Virtual Machine .dll file (jvm.dll) which is required to run Java from TM1 TurboIntegrator. |
| keyfile | | 🔄 | Authentication and Encryption | file path to the key database file. The key database file contains the server certificate and trusted certificate authorities. The server certificate is used by TM1 and the Admin server. |
| keylabel | | 🔄 | Authentication and Encryption | label of the server certificate in the key database file. |
| keystashfile | | 🔄 | Authentication and Encryption | file path to the key database password file. The key database password file is the key store that contains the password to the key database file. |
| Language | | 🔄 | Logs and Messages | language used for TM1. This parameter applies to messages generated by the server and is also used in the user interface of the server dialog box when you run the server as an application instead of a Windows service. |
| LDAPHost | localhost | 🔄 | Authentication and Encryption | domain name or dotted string representation of the IP address of the LDAP server host. |
| LDAPPasswordFile | | 🔄 | Authentication and Encryption | password file used when LDAPUseServerAccount is not used. This is the full path of the .dat file that contains the encrypted password for the Admin Server's private key. |
| LDAPPasswordKeyFile | | 🔄 | Authentication and Encryption | password key used when LDAPUseServerAccount is not used. |
| LDAPPort | 389 | 🔄 | Authentication and Encryption | port TM1 uses to bind to an LDAP server. |

| LDAPSearchBase | 🔄 | Authentication and Encryption | node in the LDAP tree where TM1 begins searching for valid users. | |

| LDAPSearchField | cn | 🔄 | Authentication and Encryption | The name of the LDAP attribute that is expected to contain the name of TM1 user being validated. |

| LDAPSkipSSLCertVerification | F | 🔄 | Authentication and Encryption | Skips the certificate trust verification step for the SSL certificate used to authenticate to an LDAP server. This parameter is applicable only when LDAPVerifyServerSSLCert=T. |

| LDAPSkipSSLCRLVerification | F | 🔄 | Authentication and Encryption | Skips CRL checking for the SSL certificate used to authenticate to an LDAP server. This parameter is applicable only when LDAPVerifyServerSSLCert=T. |

| LDAPTimeout | 0 | Authentication and Encryption | number of seconds that TM1 waits to complete a bind to an LDAP server. If the LDAPTimeout value is exceeded TM1 immediately aborts the connection attempt. | |

| LDAPUseServerAccount | 🔄 | Authentication and Encryption | Determines if a password is required to connect to TM1 when using LDAP authentication. | |

| LDAPVerifyCertServerName | F | 🔄 | Authentication and Encryption | server to use during the SSL certificate verification process for LDAP server authentication. This parameter is applicable only when LDAPVerifyServerSSLCert=T. |

| LDAPVerifyServerSSLCert | F | 🔄 | Authentication and Encryption | Delegates the verification of the SSL certificate to TM1. This parameter is useful for example when you are using LDAP with a proxy server. |

| LDAPWellKnownUserName | 🔄 | Authentication and Encryption | user name used by TM1 to log in to LDAP and look up the name submitted by the user. | |

| LoadPrivateSubsetsOnStartup | F | 🔄 | Subsets | This configuration parameter determines if private subsets are loaded when TM1 starts. |

| LoadPublicViewsAndSubsetsAtStartup | T | 🔄 | Views | Added in v2.0.8. Determines whether public subsets and views are loaded when TM1 starts and kept loaded to avoid lock contention during first use. |

| LockPagesInMemory | F | 🔄 | deprecated | Deprecated as of IBM TM1 with Watson version 2.0.9.7. When this parameter is enabled, memory pages used by the TM1 process are held in memory (locked) and do not page out to disk under any circumstances. This retains the pages in memory over an idle period, making access to TM1 data faster after the idle period. |

| LoggingDirectory | | 🔄 | Logs and Messages | directory to which TM1 saves its log files. |

| LogReleaseLineCount | 5000 | | Logs and Messages | number of lines that a search of the Transaction Log will accumulate in a locked state before releasing temporarily so that other Transaction Log activity can proceed. |

| MagnitudeDifferenceToBeZero | | 🔄 | Calculations | order of magnitude of the numerator relative to the denominator above which the denominator equals zero when using a safe division operator. |

| MaskUserNameInServerTools | T | 🔄 | Logs and Messages | Determines whether usernames in server administration tools are masked until a user is explicitly verified as having administrator access. |

| MaximumCubeLoadThreads | 0 | 🔄 | Multithreading | Specifies whether the cube load and feeder calculation phases of server loading are multi-threaded so multiple processor cores can be used in parallel. |

| MaximumLoginAttempts | 3 | | Authentication and Encryption | maximum number of failed user login attempts permissible on TM1. |

| MaximumMemoryForSubsetUndo | 10240 | | Cache | maximum amount of memory in kilobytes to be dedicated to storing the Undo/Redo stack for the Subset Editor. |

| MaximumSynchAttempts | 1 | 🔄 | Network | maximum number of times a synchronization process on a planet database will attempt to reconnect to a network before the process fails. |

| MaximumTILockObjects | 2000 | 🔄 | Turbo Integrator | This configuration parameter sets the maximum lock objects for a TurboIntegrator process. Used by the synchronized() TurboIntegrator function. |

| MaximumUserSandboxSize | 500 MB | | Sandboxes | maximum amount of RAM (in MB) to be allocated per user for personal workspaces or sandboxes. |

| MaximumViewSize | 500 MB | | Views | maximum amount of memory (in MB) to be allocated when a user accesses a view. |

| MDXSelectCalculatedMemberInputs | T | | MDX | Changes the way in which calculated members in MDX expressions are handled when zero suppression is enabled. |

| MemoryCache.LockFree | F | | Cache | Switches global garbage collection to use lock-free structures. |

| MessageCompression | T | 🔄 | Network | Enables message compression for large messages, which significantly reduces network traffic. |

| MTCubeLoad | F | | Multithreading | Enables multi-threaded loading of individual cubes. |

| MTCubeLoad.MinFileSize | 10KB | | Multithreading | minimum size for cube files to be loaded on multiple threads. |

| MTCubeLoad.UseBookmarkFiles | F | | Multithreading | Enables the persisting of bookmarks on disk. |

| MTCubeLoad.Weight | 10 | | Multithreading | number of atomic operations needed to load a single cell. |

| MTFeeders | F | | Multithreading | Applies multi-threaded query parallelization techniques to the following processes: the CubeProcessFeeders TurboIntegrator function, cube rule updates, and construction of multi-threaded (MT) feeders at start-up. |

| MTFeeders.AtStartup | F | | Multithreading | If the MTFeeders configuration option is enabled, enabling MTFeeders.AtStartup applies multi-threaded (MT) feeder construction during server start-up. |

| MTFeeders.AtomicWeight | 10 | | Multithreading | number of required atomic operations to process feeders of a single cell. |

| MTQ | -1 | | Multithreading | maximum number of threads per single-user connection when multi-threaded optimization is applied. Used when processing queries and in batch feeder and cube load operations. |

| MTQ.OperationProgressCheckSkipLoopSize | 10000 | | Multithreading | fine-tune multi-threaded query processing. |

| MTQ.SingleCellConsolidation | T | | Multithreading | fine-tune multi-threaded query processing. |

| MTQQuery | T | | Multithreading | enable multi-threaded query processing when calculating a view to be used as a TurboIntegrator process datasource. |

| NetRecvBlockingWaitLimitSeconds | 0 | 🔄 | Network | have the database perform the wait period for a client to send the next request as a series of shorter wait periods. This parameter changes the wait from one long wait period to shorter wait periods so that a thread can be canceled if needed. |

| NetRecvMaxClientIOWaitWithinAPIsSeconds | 0 | 🔄 | Network | maximum time for a client to do I/O within the time interval between the arrival of the first packet of data for a set of APIs through processing until a response has been sent. |

| NIST_SP800_131A_MODE | T | 🔄 | Authentication and Encryption | the database must operate in compliance with the SP800-131A encryption standard. |

| ODBCLibraryPath | | 🔄 | ODBC | name and location of the ODBC interface library (.so file) on UNIX. |

| ODBCTimeoutInSeconds | 0 | | ODBC | timeout value that is sent to the ODBC driver using the SQL_ATTR_QUERY_TIMEOUT and SQL_ATTR_CONNECTION_TIMEOUT connection attributes. |

| OptimizeClient | 0 | | Cache | Added in v2.0.7. This parameter determines whether private objects are loaded when the user authenticates during TM1 startup. |

| OracleErrorForceRowStatus | 0 | 🔄 | ODBC | ensure the correct interaction between TurboIntegrator processes and Oracle ODBC data sources. |

| PasswordMinimumLength | | | Authentication and Encryption | minimum password length for clients accessing TM1. |

| PasswordSource | TM1 | 🔄 | Authentication and Encryption | Compares user-entered password to the stored password. This parameter is applicable only to TM1s on cloud or local. It is not applicable to TM1 Engine in Cloud Pak for Data or Amazon Web Services. |

| PerfMonIsActive | T | | Logs and Messages | turn updates to TM1 performance counters on or off. |

| PerformanceMonitorOn | F | | Logs and Messages | Automatically starts populating the }Stats control cubes when TM1 starts. |

| PersistentFeeders | F | 🔄 | Rules | To improve reload time of cubes with feeders, set the PersistentFeeders configuration parameter to true (T) to store the calculated feeders to a .feeders file. |

| PortNumber | 12345 | 🔄 | Network | server port number used to distinguish between multiple TM1 servers running on the same computer. |

| PreallocatedMemory.BeforeLoad | F | | Cache | Added in v2.0.5. Specifies whether the preallocation of memory occurs before TM1 loading or in parallel. |

| PreallocatedMemory.Size | 0 | | Cache | Added in v2.0.5. Triggers the preallocation of pooled TM1 memory. |

| PreallocatedMemory.ThreadNumber | 4 | | Multithreading | Added in v2.0.5. Specifies the number of threads used for preallocating memory in multi-threaded cube loading. |

| PrivilegeGenerationOptimization | F | 🔄 | Cache | When TM1 generates security privileges from a security control cube, it reads every cell from that cube. |

| ProgressMessage | T | 🔄 | Logs and Messages | whether users have the option to cancel lengthy view calculations. |

| ProportionSpreadToZeroCells | T | 🔄 | Logs and Messages | Allows you to perform a proportional spread from a consolidation without generating an error when all the leaf cells contain zero values. |

| PullInvalidationSubsets | T | | Subsets | Reduces metadata locking by not requiring an R-lock (read lock) on the dimension during subset creation, deletion, or loading from disk. |

| RawStoreDirectory | | | Logs and Messages | location of the temporary unprocessed log file for audit logging if logging takes place in a directory other than the data directory. |

| ReceiveProgressResponseTimeoutSecs | | | Network | The ReceiveProgressResponseTimeoutSecs parameter configures TM1 to sever the client connection and release resources during a long wait for a Cancel action. |

| ReduceCubeLockingOnDimensionUpdate | F | 🔄 | Dimensions | Reduces the occurrence of cube locking during dimension updates. |

| RulesOverwriteCellsOnLoad | F | 🔄 | Rules | Prevents cells from being overwritten on TM1 load in rule-derived data. |

| RunningInBackground | F | 🔄 | Linux | When you add the line RunningInBackground=T to the TM1 configuration, TM1 on UNIX runs in background mode. |

| SaveFeedersOnRuleAttach | T | | Rules | When set to False, postpones writing to feeder files until SaveDataAll and CubeDataSave are called, instead of updating the files right after rules are changed and feeders are generated at TM1 start time. |

| SaveTime | | | Chores | time of day to execute an automatic save of TM1 data; saves the cubes every succeeding day at the same time. As with a regular shutdown, SaveTime renames the log file, opens a new log file, and continues to run after the save. |

| SecurityPackageName | | 🔄 | Authentication and Encryption | If you configure TM1 to use Integrated Login, the SecurityPackageName parameter defines the security package that authenticates your user name and password in MS Windows. |

| ServerCAMURI | | | Cognos Analytics | URI for the internal dispatcher that TM1 should use to connect to Cognos Authentication Manager (CAM). |

| ServerCAMURIRetryAttempts | 3 | 🔄 | Cognos Analytics | number of attempts made before moving on to the next ServerCAMURI entry in TM1 configuration. |

| ServerLogging | F | | Logs and Messages | Generates a log with the security activity details on TM1 that are associated with Integrated Login. |

| ServerName | | 🔄 | Network | name of the server. If you do not supply this parameter, TM1 names the server Local and treats it as a local server. |

| ServicePrincipalName | | 🔄 | Authentication and Encryption | service principal name (SPN) when using Integrated Login with TM1 Web and constrained delegation. |

| SpreadErrorInTIDiscardsAllChanges | F | 🔄 | Turbo Integrator | If SpreadErrorInTIDiscardsAllChanges is enabled and a spreading error occurs as part of a running TurboIntegrator script, all changes that were made by that TurboIntegrator script are discarded. |

| SpreadingPrecision | 1e-8 | | Calculations | Use the SpreadingPrecision parameter to increase or decrease the margin of error for spreading calculations. The SpreadingPrecision parameter value is specified with scientific (exponential) notation. |

| SQLRowsetSize | 50 | | ODBC | Added in v2.0.3. Specifies the maximum number of rows to retrieve per ODBC request. |

| SSLCertAuthority | | 🔄 | Authentication and Encryption | name of TM1's certificate authority file. This file must reside on the computer where TM1 is installed. |

| SSLCertificate | | 🔄 | Authentication and Encryption | full path of TM1's certificate file, which contains the public/private key pair. |

| SSLCertificateID | | 🔄 | Authentication and Encryption | name of the principal to whom TM1's certificate is issued. |

| StartupChores | | 🔄 | Chores | StartupChores is a configuration parameter that identifies a list of chores that run at database startup. |

| SubsetElementBreatherCount | -1 | | Subsets | handles locking behavior for subsets. |

| SupportPreTLSv12Clients | F | 🔄 | Authentication and Encryption | As of TM1 10.2.2 Fix Pack 6 (10.2.2.6), all SSL-secured communication between clients and databases in TM1 uses Transport Layer Security (TLS) 1.2. This parameter determines whether clients prior to 10.2.2.6 can connect to a TM1 server at 10.2.2.6 or later. |